Private Agents

To run your tests on your own premises, create an agent pool and then start at least as many agents as the test requires. A typical test scenario in which A calls B requires two agents: one simulating the calling party and one simulating the called party.

Creating an agent pool

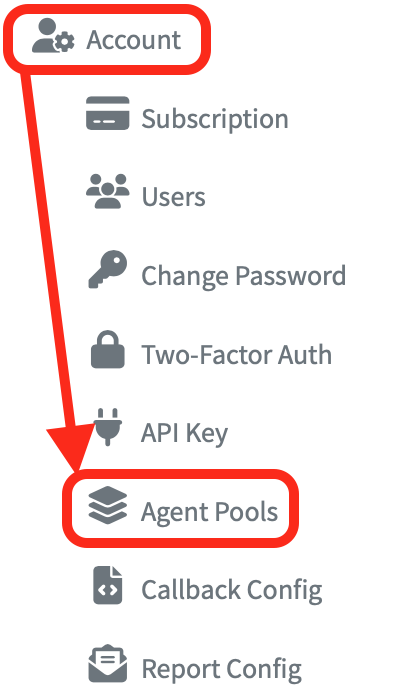

- Log into your account on the Sipfront dashboard and navigate to Agent Pools under the Account menu on the left.

- Click the Add Agent Pool button at the top to create a new pool.

- Give your pool a Name and a Description. This information is shown later when you configure a test and select the agent pool.

- If you plan to perform load tests, you have to configure the maximum performance capacity per agent in this pool. This matters because you run the agents on your own VMs or bare metal, and the performance of that system determines how many calls or requests per second an agent can produce and consume, and how many concurrent calls it can handle (which is especially important for audio generation). The Sipfront orchestration system then allocates the number of agents required for your target load, based on the figures you configure here.

Tip

If in doubt, configure a rather high number here, such as 50 calls/sec and 1000 concurrent calls, and start with a moderate target load in your tests so that only one agent per side is used. Then increase the target load until the agent starts to struggle, and set your agent pool capacity to this (or a slightly smaller) number.

- Store the config with the Save button.

- At the bottom right of the detailed view, the interface now shows you the command to launch an agent in your own environment.

# Run agent in this pool using Docker:
docker run \
    --init \
    --pull always \
    --env SF_POOL_ID="abcdefgh-1234-abcd-1234-abcdefgh1234" \
    --env SF_POOL_SECRET="some-random-string" \
    sipfront/agent:latest
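As a rough illustration of how the per-agent capacity figures relate to the number of agents started for a load test, the sketch below assumes that the target load is simply divided by the per-agent capacity and rounded up, taking whichever limit (calls per second or concurrent calls) demands more agents. This is an assumption for illustration, not Sipfront's documented allocation logic, and the function names `ceil_div` and `agents_needed` are hypothetical.

```sh
#!/bin/sh
# Sketch: estimate the number of agents per side for a target load.
# ASSUMPTION: plain ceiling division; not Sipfront's documented algorithm.

# ceil_div A B -> prints ceil(A / B) for positive integers
ceil_div() {
    echo $(( ($1 + $2 - 1) / $2 ))
}

# agents_needed TARGET_CPS TARGET_CONCURRENT CAP_CPS CAP_CONCURRENT
# Runs in a subshell so its variables do not leak into the caller.
agents_needed() (
    by_cps=$(ceil_div "$1" "$3")
    by_calls=$(ceil_div "$2" "$4")
    if [ "$by_cps" -gt "$by_calls" ]; then
        echo "$by_cps"
    else
        echo "$by_calls"
    fi
)

# Target: 120 calls/sec and 3500 concurrent calls, with a pool capacity
# of 50 calls/sec and 1000 concurrent calls per agent.
agents_needed 120 3500 50 1000   # prints 4
```

In this example the concurrent-call limit is the bottleneck: three agents would cover the call rate, but four are needed for 3500 parallel calls.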

Run agents in bridge mode

When you run the Sipfront agent with the docker command shown above, the agent launches in bridge mode, which means it receives a private IP address within the Docker network. This may be the intended behavior if you want to test how your system handles NATed user agents.

Run multiple agents in host mode

Host networking with Docker has the advantage of exposing all network interfaces of the host system to the Docker container. That way, there is no NAT between the user agents simulated within the agent container and the host system. If the host system has public IP addresses, the agent container can use these too, so there is also no NAT between the UAs and the system under test.

However, when the whole network stack is shared between containers, you have to make sure there are no conflicts between the ports the agents bind to. To avoid such conflicts, you can override (or explicitly define) the ports and port ranges used by an agent using environment variables.

The following helper script will start a given number of agents, each using its own dedicated port range.
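A minimal sketch of what such a helper script could look like is shown here; it is not the original listing. The environment variable names `EXAMPLE_PORT_MIN` and `EXAMPLE_PORT_MAX` are placeholders, since the names the agent actually reads are not given in this section, and the start port, the range size, the container name and the `--restart always` policy are illustrative assumptions. The script only prints the docker commands so they can be reviewed before anything is started.

```sh
#!/bin/sh
# Sketch: prepare N agents in host mode, each with its own port range.
# ASSUMPTION: EXAMPLE_PORT_MIN / EXAMPLE_PORT_MAX are placeholder names,
# not confirmed agent settings. Pool ID and secret are the sample values.

PORT_START=20000      # first port handed out; keep clear of the ephemeral range
PORTS_PER_AGENT=200   # size of each agent's port range (illustrative value)
POOL_ID="abcdefgh-1234-abcd-1234-abcdefgh1234"
POOL_SECRET="some-random-string"

# port_min INDEX -> first port of the agent with the given zero-based index
port_min() {
    echo $(( PORT_START + $1 * PORTS_PER_AGENT ))
}

# port_max INDEX -> last port of that agent's range
port_max() {
    echo $(( PORT_START + ($1 + 1) * PORTS_PER_AGENT - 1 ))
}

# agent_command INDEX -> prints the docker command for one agent
# (--restart always is an assumed restart policy)
agent_command() {
    echo "docker run --pull always --restart always -d" \
        "--name sipfront-agent-$1 --init --network host" \
        "--env SF_POOL_ID=$POOL_ID --env SF_POOL_SECRET=$POOL_SECRET" \
        "--env EXAMPLE_PORT_MIN=$(port_min "$1")" \
        "--env EXAMPLE_PORT_MAX=$(port_max "$1")" \
        "sipfront/agent:latest"
}

# Dry run: print the commands for the requested number of agents (default 2).
AGENTS="${1:-2}"
i=0
while [ "$i" -lt "$AGENTS" ]; do
    agent_command "$i"
    i=$(( i + 1 ))
done
```

With these values the first agent gets ports 20000 to 20199 and the second 20200 to 20399, so the ranges never overlap.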


- Start port: The port at which to start allocating ports for agents. Make sure it does not collide with the ephemeral port range used by your system.

- Port count: The total number of ports to use per agent. The value set in the script is sufficient for functional tests. If you plan to do performance tests, you need to allocate more ports (at least 100 plus 4 per concurrent call).

- Pull policy (--pull always): Makes sure the latest agent version is always used.

- Restart policy: If the agent crashes for whatever reason, or if you reboot the host machine or restart Docker, this makes sure that the agent is restarted automatically. This might or might not be intended in your case.

- Daemon mode: Runs the agent in the background. This is usually what you want if you run multiple agents on one machine and want to keep them running for a longer time.

- Container name: Gives the agent a meaningful name.

- Init process (--init): Uses an init system inside the container to avoid zombie processes on your host system.

- Host networking: Switches the Docker container to host mode, so all network interfaces of the host are exposed to the container.
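The sizing rule for performance tests (at least 100 ports plus 4 per concurrent call) can be written as a small calculation. The function name `ports_needed` is hypothetical, and the result should be read as a lower bound for one agent.

```sh
#!/bin/sh
# ports_needed CONCURRENT_CALLS -> minimum ports for one agent:
# 100 base ports plus 4 per concurrent call.
ports_needed() {
    echo $(( 100 + 4 * $1 ))
}

ports_needed 250   # prints 1100
```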

Important

When running in host mode, make sure that your ports do not collide with the ephemeral port range of your system. You can obtain the range by running cat /proc/sys/net/ipv4/ip_local_port_range.
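One way to automate this check is sketched below. It reads the ephemeral range from the proc file mentioned above and compares it with the port range you intend to give your agents. The values of `AGENT_LO` and `AGENT_HI` and the function name `ranges_overlap` are illustrative assumptions.

```sh
#!/bin/sh
# ranges_overlap LO1 HI1 LO2 HI2 -> exit status 0 if the inclusive ranges overlap
ranges_overlap() {
    [ "$1" -le "$4" ] && [ "$3" -le "$2" ]
}

# Port range planned for the agents (illustrative values).
AGENT_LO=20000
AGENT_HI=20399

RANGE_FILE=/proc/sys/net/ipv4/ip_local_port_range
if [ -r "$RANGE_FILE" ]; then
    # The file holds two numbers: the first and the last ephemeral port.
    read -r EPH_LO EPH_HI < "$RANGE_FILE"
    if ranges_overlap "$AGENT_LO" "$AGENT_HI" "$EPH_LO" "$EPH_HI"; then
        echo "WARNING: $AGENT_LO-$AGENT_HI overlaps ephemeral range $EPH_LO-$EPH_HI"
    else
        echo "OK: $AGENT_LO-$AGENT_HI is outside ephemeral range $EPH_LO-$EPH_HI"
    fi
fi
```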

Info

While the agent runs fine in Docker on Mac in bridge mode, the host mode is not exactly what you might expect, because there is another layer of networking in between. To avoid any hassles, run agents in host mode on a Linux machine.

Collect agent logs in syslog

By default, the agent writes its logs to stdout. To collect them in syslog instead, add the following lines to your start script.

...
--log-driver syslog \
--log-opt "syslog-address=udp://127.0.0.1:514" \
--log-opt tag="{{.Name}}" \
--env SF_LOG_SYSLOG="127.0.0.1:514" \
...

This helps when you run many agents on a single machine and want to preserve their logs for troubleshooting.

Using a specific outbound IP

By default, an agent determines its own public IP by sending a request to api.sipfront.com, which responds with the actual public (potentially NATed) IP of the agent. This works in environments with a standard NAT, and also in environments like an AWS EC2 instance with an advertised IP.

However, in some cases you might run agents on machines with multiple network interfaces and/or multiple IP addresses on an interface, and want to use an IP address that is not related to the default route. An example is a host machine with a public IP, or at least a default route to the public Internet, and a tun interface for a VPN. If that specific IP should be used by the agent to communicate with the system under test, you can set it using these environment variables when starting the Docker container for the agent:

...
--env SF_EGRESS_IP="10.11.12.13" \
--env SF_PUBLIC_IP="10.11.12.13" \
...

You can also set SF_PUBLIC_IP alone if you want to control which IP address is written into the outbound SIP messages themselves, regardless of the actual IP routing. The agent uses this mechanism by default on systems like EC2, where the IP of the host is a private IP but there is the notion of an advertised IP address, which needs to be set, for example, in the Contact/Via/Record-Route headers or the SDP c= line if the far end does not support NAT traversal.
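If the address of the VPN interface is not fixed, it can be looked up before the agent is started. The sketch below assumes a Linux host with the iproute2 `ip` tool and an interface called `tun0`; the interface name and the helper `first_ipv4` are assumptions for illustration. It prints the two `--env` options from the example above with the detected address filled in.

```sh
#!/bin/sh
# first_ipv4: read `ip -4 -o addr show` output on stdin and print the
# first IPv4 address without its prefix length.
first_ipv4() {
    awk '$3 == "inet" { split($4, a, "/"); print a[1]; exit }'
}

IFACE="tun0"   # ASSUMPTION: name of the VPN interface; adjust to your system

if command -v ip >/dev/null 2>&1; then
    VPN_IP=$(ip -4 -o addr show dev "$IFACE" 2>/dev/null | first_ipv4)
    if [ -n "$VPN_IP" ]; then
        # Options to add to the docker run command of the agent.
        echo "--env SF_EGRESS_IP=\"$VPN_IP\" --env SF_PUBLIC_IP=\"$VPN_IP\""
    else
        echo "no IPv4 address found on $IFACE" >&2
    fi
fi
```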

Required domains/ports (Firewall)

To allow the agent to communicate with the Sipfront Cloud, the following domain and port combinations need to be opened with FQDN rules on the customer firewall.

| Domain | Protocol | Port |
|---|---|---|
| mqtt.sipfront.net | tcp | 443 |
| siptrace.sipfront.net | udp | 9060 |
| siptrace.sipfront.net | tcp | 9061/9062 |
| api.sipfront.com | tcp | 443 |
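To verify the firewall rules from the agent host, the TCP entries of the table can be probed with a short script. The sketch below assumes that `bash` and the coreutils `timeout` command are available, and it reads 9061/9062 as two separate ports. The UDP port 9060 cannot be verified with a simple connection attempt and is left out. The function names are hypothetical, and the checks only run when the script is called with `--run`.

```sh
#!/bin/sh
# tcp_endpoints: the TCP host/port pairs from the table, one per line
tcp_endpoints() {
    cat <<'EOF'
mqtt.sipfront.net 443
siptrace.sipfront.net 9061
siptrace.sipfront.net 9062
api.sipfront.com 443
EOF
}

# check_tcp HOST PORT -> exit status 0 if a TCP connection opens within 5 seconds
# Uses bash's /dev/tcp pseudo-device; host and port are passed as $0 and $1.
check_tcp() {
    timeout 5 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" </dev/null 2>/dev/null
}

# Run the checks only when called with --run, e.g. `sh check-firewall.sh --run`.
if [ "${1:-}" = "--run" ]; then
    tcp_endpoints | while read -r host port; do
        if check_tcp "$host" "$port"; then
            echo "open    $host:$port"
        else
            echo "BLOCKED $host:$port"
        fi
    done
fi
```

A successful connection only shows that the port is reachable; it does not confirm that the agent can authenticate against the pool.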